For most of computing’s early history, learning to use a machine meant learning to think like one. You memorized syntax. You typed commands with precision. One wrong character and nothing worked. The machine didn’t meet you halfway; you went all the way to it.

The history of interaction design is, in its first chapter, the story of designers who decided that wasn’t good enough. That the burden of understanding should sit with the product, not the person using it. That a key principle of any well-designed system is this: it should be learnable without instruction. This piece traces how that idea took root and what it took to make it real.

Hari Nallan & Mohita Jaiswal - March 2021

What Interaction Design Actually Is

Before screens, before software, before the personal computer existed in any recognizable form, industrial designers were already grappling with the question that interaction design would eventually claim as its own: how does a person form a working relationship with a system they’ve never encountered before?

Physical affordances were the original interaction design problems: the way a door handle signals push or pull, the way a kettle’s grip tells you where to hold it. The discipline’s roots aren’t in computing. They’re in the designed objects that surrounded people long before any of them touched a keyboard.

The term “interaction design” itself wasn’t coined until the mid-1980s, when Bill Moggridge and Bill Verplank gave it a name while working together at ID Two and later IDEO. To Verplank, it was an adaptation of the computer science term “user interface design” for the industrial designers entering the field. To Moggridge, it was an improvement on “soft-face,” a term he had coined in 1984 to describe the application of industrial design thinking to products containing software.

What both were reaching for was the same idea: designing interactive systems required a different kind of thinking than engineering them. Function wasn’t enough. The relationship between the system and the person using it had to be designed deliberately.

Command Line Interfaces: The Machine's Language

The first widespread digital interaction paradigm was built entirely around the machine’s convenience, not the user’s. Command line interfaces (CLIs) required users to learn a specific syntax to get anything done: the exact commands, the exact spelling, and the exact structure. There was no visual guidance, no suggestion of what was possible, and no recovery path that didn’t involve already knowing what you were doing.

The computer of the 1960s and 1970s was, in practice, a tool for specialists. Engineers, researchers, and programmers who had invested the time to learn the system’s language were rewarded with extraordinary efficiency. Everyone else was excluded, not by intent, but by design default. This exclusion was the seed of everything that followed. The friction of command line interfaces made the clearest possible case for a different approach: if technology was going to reach beyond specialists, the interface had to stop demanding that users think like machines.

The GUI: Designing for How Humans Already Think

On December 9, 1968, Douglas Engelbart and his team at Stanford Research Institute staged a 90-minute public demonstration that introduced the world to the computer mouse, hypertext linking, real-time text editing, and collaborative computing — all in a single session. The audience of roughly a thousand computer professionals watched what is now called the “Mother of All Demos.” Most of what they saw that day wouldn’t become mainstream for another two decades.

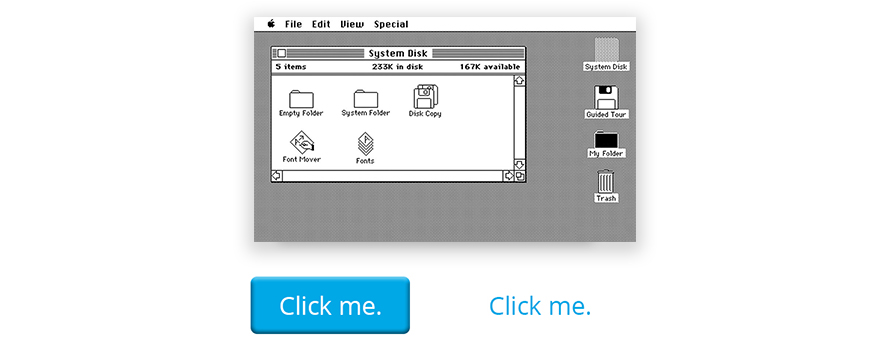

The ideas Engelbart demonstrated found their fullest early expression at Xerox PARC, where researchers developed the visual desktop metaphor of windows, icons, and menus that would eventually define the graphical user interface (GUI). Apple’s Lisa in 1983, and then the Macintosh in 1984, brought the GUI to the personal computer at consumer scale for the first time.

The shift was philosophical as much as visual. Design principles built around human perception replaced syntax with recognition: the trash can icon behaved like a real bin, and the folder worked like a real folder. Users didn’t need to memorize commands. They navigated a visual space that borrowed from the physical world they already understood.

This is what made the GUI transformative: it moved the cognitive load from the user to the designer. The interface now had to do the work of communicating what was possible. User experience became a design concern because, for the first time, the design of the interface directly determined whether a person could use the product at all.

“The best interaction design doesn't teach users how to use a product. It makes the product fluent in the way humans already think.” — Deepali Saini | CEO at Think Design Collaborative

The World Wide Web: Learnability at Global Scale

The graphical user interface made the personal computer accessible. The World Wide Web made the problem of learnability far harder.

When Tim Berners-Lee published the first web page at CERN in 1991, the interaction model it introduced was unlike anything that had preceded it. It asked people to work with hyperlinks and non-linear navigation, all through a browser. There was no desktop metaphor to fall back on. Users had to learn a new kind of exploration: clicking into the unknown, navigating without a map, finding their way back without a physical reference point.

What made this genuinely difficult for anyone designing interactive systems was the loss of a controlled environment. Desktop software designers could assume a known operating system, a known screen size, and a known set of user capabilities. Web designers had none of those guarantees. The interface had to be learnable by anyone, on anything, arriving from anywhere, with no prior instruction.

The design principles that had been sufficient for desktop software had to stretch. Navigation systems, visual hierarchy, link behavior, and error states each had to communicate intent clearly enough that a first-time visitor from anywhere in the world could orient themselves without help. User experience stopped being about one product for one type of user. It became about designing for the full range of human diversity at once.

The World Wide Web didn’t solve learnability. It revealed just how deep the problem went and how much design thinking it would take to make technology genuinely accessible to everyone.

Every interface paradigm covered here — from command line interfaces to the GUI to the early web — was a response to the same failure: technology that worked perfectly for the people who built it and no one else.

Learnability was never fully solved in this era. The GUI was a dramatic improvement over the CLI, but it still required training, still rewarded familiarity, and still excluded users who couldn’t access or afford the hardware and software that came with it. The web democratized access and immediately created new barriers, such as navigation conventions that seemed obvious to designers and baffling to first-time users.