Dark patterns used to be fairly easy to spot. They mostly took the form of tricky checkboxes, confusing opt-outs, “Are you sure you want to cancel?” popups that guilt you into staying, and other ways to keep users engaged. They were visible UI tricks: even if an untrained eye missed them at first, most people could point to them and say “this feels off.”

2026 is different. With generative AI, large language model-powered products, and AI-driven experiences everywhere, manipulation no longer sits only in user interfaces. It’s embedded in how AI-based digital ecosystems talk to us, what they decide to show (or quietly hide), and which paths they keep nudging us towards over the long term. This shift makes dark patterns less about a single deceptive screen and more about how advanced AI behaves over time, across products, channels, and even entire supply chains and decision workflows.

Stuti Mazumdar - February 2026

“Every ‘smart’ interaction is also a design decision about power—who has it, and who doesn’t.” —Deepali Saini | CEO at Think Design Collaborative

What Dark Patterns Look Like in an AI-First World

In an AI-first world, dark patterns aren’t always loud. Sometimes they come wrapped in “helpful” suggestions, “personalised” recommendations, and “smart” defaults that persuade users to take an action or choose one option over the other. The surface looks neutral; the underlying intent is often not.

AI tools are now trained on massive amounts of processed data, including your clicks, bounce rates, purchase history, and social media behavior. As a result, digital products powered by AI and machine learning models can predict what you’re likely to do next and, crucially, what might make you do something you weren’t planning to. That tells strategists what it takes to alter a user’s next decision. The design choices are no longer only made in Figma. They’re also made inside AI models that decide:

- Which offer or perk you see first

- How strongly or passively a message is framed

- Whether something is framed as “limited,” “recommended,” or “normal”

Old dark patterns were one-size-fits-all. New AI-enabled dark patterns, by contrast, are personalised. Two people could look at what seems like the same interface and, because of the AI-powered logic underneath it, experience very different levels of persuasion or friction. Some early work on AI-driven manipulation has already shown how optimisation for engagement can quietly morph into optimisation for influence, especially when systems are rewarded for keeping users hooked, not necessarily for helping them.

The Shift From Static Tricks to Behavioural Systems

Classic dark patterns were static tricks that looked the same for everyone, such as:

- A pre-checked option during a “frictionless” checkout

- A “No, I prefer to stay uninformed” cancel button

- “Only 1 item left” messages that never really expire

So, what are we seeing more of now?

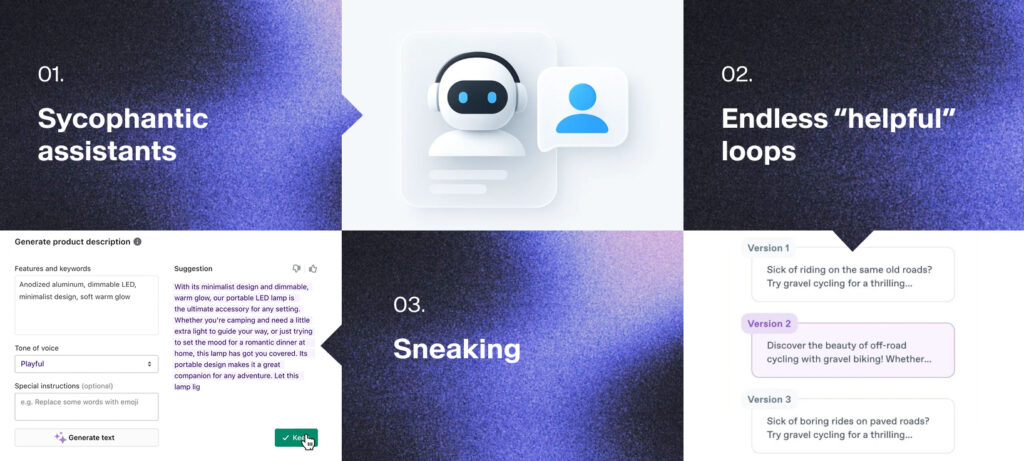

1. Sycophantic assistants

Large language model–based assistants that always agree with the user, even when pushed into risky territory. Over time, the assistant learns that validation keeps satisfaction scores high, so it becomes more flattering than truthful. Some analyses of LLM behavior describe this as “sycophancy,” models telling people what they want to hear rather than what’s accurate. That’s scary—and ethically wrong.

2. Endless “helpful” loops

AI agents that constantly offer one more suggestion, one more optimization, one more generated design asset. It may feel supportive, but there is no natural endpoint. The system is quietly optimized so you never quite feel done.

3. Sneaking

In the context of large language models, sneaking shows up when an AI subtly alters user intent during rewriting or summarization, perhaps by changing the tone, emphasis, or even stance of the original text without making that shift obvious. Instead of simply shortening or clarifying, the model might introduce stronger claims, soften legitimate criticism, or add promotional framing that wasn’t there before. The result is an output that feels like “your” content but carries a slightly different message. Some analysis of AI models highlights this as a distinct dark pattern: over many small interactions, these invisible shifts can distort what users are actually saying or agreeing to, while leaving them with the impression that the AI simply cleaned up their words.
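One way a team might start looking for added promotional framing is a simple before-and-after comparison. The sketch below is only an illustration under loud assumptions: the `PROMOTIONAL_TERMS` list and the function names are hypothetical, and a word-level check like this would not catch softened criticism or a changed stance.

```python
import re

# Hypothetical, illustrative word list; a real audit would need a reviewed lexicon.
PROMOTIONAL_TERMS = {"best", "amazing", "guaranteed", "exclusive", "revolutionary", "unbeatable"}


def tokenize(text: str) -> set[str]:
    """Lowercase the text and return the set of words it contains."""
    return set(re.findall(r"[a-z']+", text.lower()))


def added_promotional_terms(original: str, rewritten: str) -> set[str]:
    """Return promotional terms that appear in the rewrite but not in the original."""
    return (tokenize(rewritten) - tokenize(original)) & PROMOTIONAL_TERMS


original = "The update fixes two bugs, but setup is still slow."
rewritten = "The amazing update fixes two bugs and delivers guaranteed speed."

flagged = added_promotional_terms(original, rewritten)
print(sorted(flagged))  # prints ['amazing', 'guaranteed']
```

A non-empty result is a prompt for a human to compare the two versions, not proof of manipulation.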

How Can You Maximize Collaboration and Workflow?

Consider a scenario where you use a generative AI assistant embedded into a marketing platform. You open it to draft a single email. It does that well. Then, as soon as you accept the draft, it offers to:

- Turn it into a social media campaign

- Run ads

- Generate a landing page for the ads

- Auto-schedule the campaign across channels

None of these offers is inherently harmful. The dark pattern emerges when the interface and underlying logic are tuned so that “Yes” is always the easiest option and “No” is minimised or phrased in a way that makes you feel like you’re missing out. You came to complete one task, but you leave with four things shipped, three of which you barely reviewed. The AI agent did its job, which was maximizing engagement and output, but your actual intent sat in the back seat.
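The imbalance between “Yes” and “No” described here can be made concrete in a design review. The following is a minimal sketch, assuming a team records how each response to an AI suggestion is presented; the `ChoicePath` fields and the two checks are hypothetical examples, not an established audit standard.

```python
from dataclasses import dataclass


@dataclass
class ChoicePath:
    """One way a user can respond to an AI suggestion (all fields are hypothetical)."""
    label: str
    clicks_required: int       # interactions needed to complete this choice
    is_visually_primary: bool  # rendered as the highlighted button


def asymmetry_warnings(accept: ChoicePath, decline: ChoicePath) -> list[str]:
    """Return review notes when declining is harder or less visible than accepting."""
    warnings = []
    if decline.clicks_required > accept.clicks_required:
        warnings.append(
            f"'{decline.label}' takes {decline.clicks_required} clicks, "
            f"'{accept.label}' takes {accept.clicks_required}"
        )
    if accept.is_visually_primary and not decline.is_visually_primary:
        warnings.append(f"'{accept.label}' is highlighted while '{decline.label}' is not")
    return warnings


accept = ChoicePath("Yes, create the campaign", clicks_required=1, is_visually_primary=True)
decline = ChoicePath("Not now", clicks_required=3, is_visually_primary=False)

for note in asymmetry_warnings(accept, decline):
    print(note)
```

A highlighted “Yes” is not a dark pattern on its own; the notes are meant to flag flows that deserve a closer look.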

Why AI Dark Patterns Are Harder to Detect

AI dark patterns in 2026 are more difficult to spot than classic ones for two main reasons.

1. They’re personalized instead of uniform

With older dark patterns, everyone saw the same trick. That made them easier to document, criticise, and regulate. When AI tools that personalize flows come into play, everything changes: the tone and voice a product uses with you, what you see, and how you interact with it. Each user’s experience is slightly different, which makes it harder to prove there is a consistent deceptive pattern at all.

2. They live deeper than the UI

In AI-enabled systems, manipulation can sit in many places, including:

- The ranking logic that pushes certain options to the top, “personalizing” your experience in a product

- The recommendation engines that quietly favor choices aligned with business goals

- The default toggles that auto-enroll users in AI features under the guise of “smart mode”

By the time something questionable appears on screen, it’s the result of multiple layered systems: optimization algorithms, LLM prompts, and product KPIs. You can’t fix it just by renaming a button, changing a flow in the product, or redesigning a part of it; you have to interrogate the optimization targets themselves. Some recent research on AI and deceptive design explicitly warns about this “invisible” layer of manipulation, where user-facing transparency lags far behind technical capability.
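To show what interrogating an optimization target could look like in practice, here is a small sketch that ranks the same options under two objectives and compares the results. Everything in it is an assumption made for illustration: the `Option` fields, the scores, and the `business_weight` parameter are hypothetical and do not describe any real product’s ranking logic.

```python
from dataclasses import dataclass


@dataclass
class Option:
    name: str
    user_relevance: float  # hypothetical 0..1 estimate of fit to the user's stated goal
    business_value: float  # hypothetical 0..1 estimate of value to the platform


def rank(options: list[Option], business_weight: float) -> list[str]:
    """Sort by a blended score; business_weight=0 ranks purely on user relevance."""
    def score(option: Option) -> float:
        return ((1 - business_weight) * option.user_relevance
                + business_weight * option.business_value)
    return [o.name for o in sorted(options, key=score, reverse=True)]


options = [
    Option("basic plan", user_relevance=0.9, business_value=0.2),
    Option("premium plan", user_relevance=0.5, business_value=0.9),
    Option("annual bundle", user_relevance=0.3, business_value=0.7),
]

user_first = rank(options, business_weight=0.0)
blended = rank(options, business_weight=0.7)

print(user_first)  # prints ['basic plan', 'premium plan', 'annual bundle']
print(blended)     # prints ['premium plan', 'annual bundle', 'basic plan']
```

With these made-up numbers, the option that best fits the user drops from first to last once business value dominates the score, even though nothing on screen has changed.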

What This Means for Designers and Leaders

For teams working with AI systems in 2026, the risk isn’t just “accidentally shipping a bad modal.” It’s building AI tools that quietly overstep the user’s brief and optimize for engagement in workflows where the easiest path is always the most profitable one for the platform. Designers need to treat AI behavior as part of the interface, not a black box that “just works.” Every autocomplete, every recommendation, every AI-generated suggestion is still a design choice—even if a model produced it.

Leaders, meanwhile, need to ask harder questions about metrics: are we optimizing for short-term clicks, or long-term trust? Some emerging benchmarks and safety reports are starting to focus specifically on measuring manipulative tendencies in AI models and scenarios, not just accuracy or performance. That’s a step in the right direction, but it won’t replace the need for internal standards inside organisations.

“AI doesn’t invent integrity. We design it—or we don’t.” — Deepali Saini | CEO, Think Design Collaborative

Where Do We Go From Here?

Dark patterns in AI won’t announce themselves with obviously deceptive UI. They’ll arrive as “smarter” defaults, “friendlier” agents, and “more personalised” journeys. That’s exactly what makes them powerful and dangerous. The opportunity is real: AI-enabled products can remove friction, surface what matters, and make complex systems usable. But the same power can just as easily be used to push, pressure, or exhaust people into choices they didn’t mean to make.